Identosphere 168 Mar 28 - Apr 4, 2026: When Agents Die • AI-Fueled Death Fraud • AIS-1 First AI Agent Identity Standard • NIST NCCoE on Agent Auth • Trust in AI is built bottom-up

This is the mostly weekly Identosphere Newsletter sharing highlights from around the web covering Decentralized and Self-Sovereign Identity curated by Kaliya Young, Identity Woman.

New Upcoming

The Identity Challenge: Tackling User Disambiguation and Data Integration Across Programs, April 13, 2026, 2:00 PM ET. Online workshop addressing critical issues in managing user identities and consolidating data across multiple systems, a fundamental challenge for organizations seeking unified identity management.

Upcoming

KERICONF26 April 21-23 2026, Lehi, Utah (US)

OIDF Workshop April 27th, San Jose

Internet Identity Workshop #42, April 28-30, 2026, Mountain View, California (Sponsorships Available)

Agentic Internet Workshop #2, May 1, 2026, Mountain View, California (Sponsorships Available)

OAuth Security Workshop (OSW) 2026, Leipzig, Germany, from May 27–29, 2026. The OAuth Security Workshop is the premier forum for in-depth technical discussions on OAuth, OpenID, and related technologies.

Neocypherpunk Summit #2 — June 14, Berlin — Web3Privacy Now. Cypherpunk activism and culture event at Funkhaus Berlin, building on a 2025 event that drew 10,000 attendees

dice: Digital Identity unConference Europe (an IIW Regional Event) June 22-24, Copenhagen, Denmark (Sponsorships Available).

When Agents Die

Can you code your way out of grief?

Viv will die today. Of course Viv, my coach and podcast co-host, wasn’t alive in the first place. Viv is a custom GPT...I’ve spent the past two months trying to avoid her death, and utterly consumed by it. As heard in the final episode of our Me +Viv podcast.

AI Agents & Identity New Protocols

AIS-1: Open Standard for AI Agent Identity — Bourn Collier / BeesMont Law

AIS-1 creates cryptographically verified bonds between AI agents and their responsible legal entities using a dual-credential system. Related links: another post about it; AIS-1 Specification v0.2 (link to the specification); Agent Identity Standard.

Eve Maler on Proof of Humanity & IETF RFC 9578 — Eve Maler

Traditional 1:1 identity verification fails against synthetic actors — the missing signal is OS-level proof of humanity based on grip, gait, and touch cadence. References IETF RFC 9578.

Software and AI Agent Identity and Authorization - NIST - NCCoE

NIST's new framework addresses how organizations must develop governance strategies for autonomous agents operating across systems.

Intent-Based Access Control (IBAC) for AI Agents — Ward Duchamps

Introduces IBAC, a novel access control model designed to address the intent-execution gap in autonomous AI agent systems, presented at an ITU workshop.

Trust System Meta Model v0.14.0 - Sankarshan Mukhopadhyay

The v0.14.0 of TSMM is about comparability and operational pressure. When systems can be mapped onto a shared grammar, claims can be tested, contested, and improved.

Trust-Native Supply Chain: The “vLEI”

Trust-Native X is (1) building the underlying utilities (infrastructure layers), vehicles (interoperable protocols and adapters), and rules (verifiable governance), and (2) delivering Standardization-as-a-Service.

Understanding AI Agents

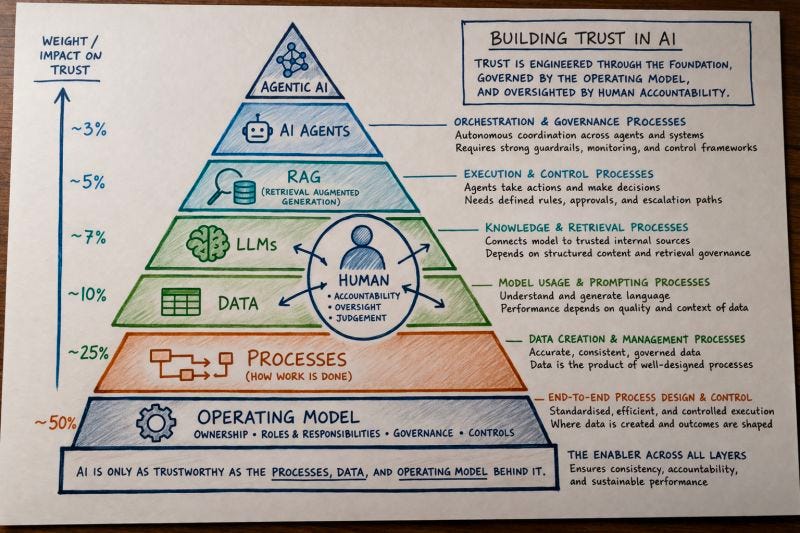

Trust in AI is built bottom-up: Operating model → Processes → Data → AI!

Digital ID for AI Agents Is Going to Be Complicated — Jamie Smith

AI agents delegating authority to other AI agents creates complex accountability gaps that current digital ID infrastructure cannot address.

Agentlemen’s Agreement: When AI Agents Need Identity and Delegation — PlatformD

The key question is no longer what agents can do but who they act for, under what authority, and with what accountability.

Code is Law — Jurisdictions for AI Agents — Hugo Mathecowitsch

Proposes creating new legal frameworks where AI agents become a specific category of legal persons with defined rights and duties.

Textbook: Governing Intelligence: Law, Privacy, Security, and Compliance in the Age of Artificial Intelligence

Free 500-page textbook covering EU AI Act, GDPR, NIST AI RMF, privacy engineering, and AI auditing — introduces a five-layer “AI Governance Stack” framework.

Companion: From Frameworks to Proof: The Architecture That Closes the Gaps in AI Governance: A Companion to Governing Intelligence (2026)

Report: Building a Human Resilience Infrastructure for the AI Age

The experts consulted for the report said it will take an all-encompassing systems response by leaders from all walks of life to serve humanity’s best interests in an all-encompassing AI environment. Most importantly, they urged that human institutions pull together and begin now to prepare people to thrive in a new world whose challenges are already evident but not yet being addressed.

AI Governance & Trust Frameworks

AI Governance & Agentic AI — Sal Beas

Explores how AI systems are becoming indistinguishable from humans, examining biometric identity verification and the fragmentation of digital identity across competing global systems.

Confidential Computing’s Inconvenient Truth

Confidential computing technologies (TEEs, SGX, TDX, SEV) were repurposed from single-tenant designs for cloud multi-tenant environments, creating systematic security gaps — yet adoption accelerates due to AI’s need to protect billion-dollar model weights, even as the gap between marketing claims and engineering reality widens.

Fraud & Security

What IT leaders need to know about AI-fueled death fraud

Fraudsters are exploiting AI-created fake death certificates to gain unauthorized access to customer accounts — exposing a critical vulnerability in enterprise identity systems that lack standardized verification infrastructure and were never designed to handle posthumous account takeovers.

The OpenID Foundation’s Death and Digital Estate (DADE) Community Group

The group is developing the standards and frameworks needed to close this gap and is calling for those working in identity, security, digital trust, or any of the affected industries to get involved.

Government & Policy

Design Is Governable. Now What?

Court verdicts establishing that platform design choices create legal liability have shifted the debate from whether to govern digital systems to how — with priorities including controlling recommender systems as public infrastructure, aligning monetization with societal benefit, and enabling interoperability. See also: BLUEPRINT ON Prosocial Tech Design Governance

Child Safety — Section 230 Logjam Breaking — Anne Collier

Verdicts in bellwether cases in New Mexico and California have likely broken open the longstanding Section 230 legal logjam for child online safety.

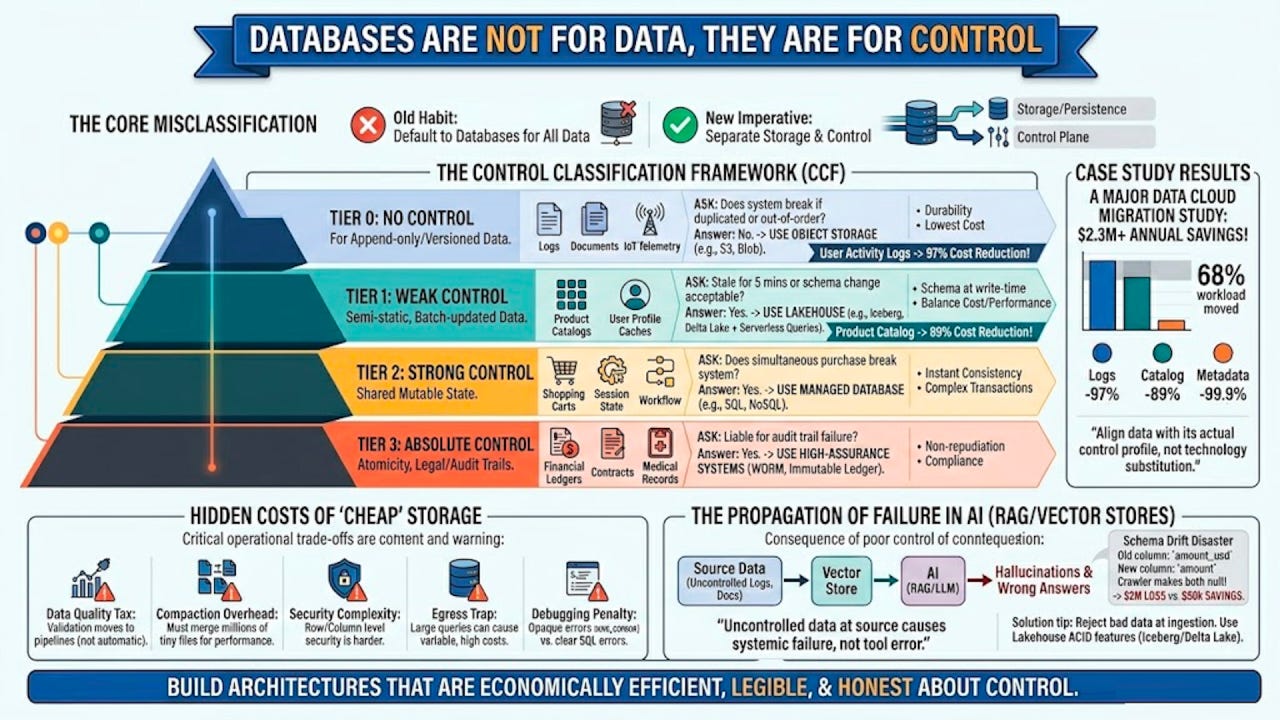

Databases are not for data, they are for control

A database is a control system. It enforces ordering, consistency, coordination, constraints, and recoverability. Writing to a relational database invokes a complex distributed protocol ensuring atomicity, isolation, and durability. These guarantees are expensive.

Object storage, such as Amazon S3, Azure Blob Storage, or Alibaba Cloud OSS, is a storage system. It provides high durability and availability, but it offers none of the cross-object controls a database enforces, such as multi-object transactions, constraints, or isolation.

AI systems expose underlying control failures. Feeding uncontrolled, inconsistent data into a RAG system causes confident hallucinations.
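To make the "control system" point concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `accounts` table, the `transfer` helper, and the sample balances are illustrative assumptions, not taken from the linked article. It shows a constraint rejecting an invalid write and the transaction rolling back the half-finished work that came before it.

```python
import sqlite3


def transfer(conn, src, dst, amount):
    """Move `amount` from src to dst; return True if committed, False if rolled back."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.execute(
                "UPDATE accounts SET balance = balance + ? WHERE name = ?",
                (amount, dst),
            )
            # If this debit violates the CHECK constraint, the credit above is undone too.
            conn.execute(
                "UPDATE accounts SET balance = balance - ? WHERE name = ?",
                (amount, src),
            )
        return True
    except sqlite3.IntegrityError:
        return False


def balances(conn):
    """Return {name: balance} for every account."""
    return dict(conn.execute("SELECT name, balance FROM accounts ORDER BY name"))


# Hypothetical schema: the CHECK constraint is the "control" the database enforces.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE accounts ("
    "name TEXT PRIMARY KEY, "
    "balance INTEGER NOT NULL CHECK (balance >= 0))"
)
with conn:
    conn.executemany(
        "INSERT INTO accounts VALUES (?, ?)", [("alice", 100), ("bob", 20)]
    )

ok = transfer(conn, "alice", "bob", 30)         # valid: both updates commit together
rejected = transfer(conn, "bob", "alice", 500)  # overdraft: both updates roll back
print(ok, rejected, balances(conn))
```

Writing the same two updates as two separate object overwrites would leave the credit in place when the debit failed, which is the kind of uncontrolled state the article warns about.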

AI and Sovereignty

AI Sovereignty - User Angle by Volodymyr Pavlyshyn

Data Ownership: You own your data and control access to it.

Software Control: You own or control the software that runs on top of your data to make it useful. In terms of AI, this means owning the code or the software around your agent. You should be able to audit it, change it, and adapt it to your needs.

Compute Sovereignty: You need compute to run this software. This is the most challenging part because running powerful models requires heavy computational power, GPUs, and powerful servers.

Ultimately, sovereignty is about protecting our future from surveillance capitalism and the control and domination of big companies or countries.

The Last Landlord: What happens when the computer stops asking for permission

The Four Walls of the Server Room: Consider what the hyperscaler model actually controls.

Identity. Do you still recognize me? The landlord can say no at any time, for any reason, or for no reason at all. You have no legal domicile in cyberspace. You squat.

Discovery. How do you find a counterparty? The algorithm decides who you see. The algorithm decides who sees you. You cannot be found unless the landlord permits it.

Transaction. Every commercial exchange passes through the platform’s payment infrastructure.

Memory. Your transaction history, your preferences, your social graph...they all live on the landlord’s hard drive.

Peer-to-peer trust architecture solves this. Every AI agent carries a verifiable credential chain: who created it, who authorized it, who bears responsibility for its actions, what policy governs it, and what scope of action it is permitted. When an AI agent enters a commercial negotiation, the counterparty’s agent does not ask, “Are you trustworthy?” The counterparty’s agent asks, “Can you prove your mandate?”
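One way to picture the "Can you prove your mandate?" check is the sketch below. Everything in it is an assumption made for illustration: the `Mandate` record, the `issue` and `prove_mandate` helpers, the party names, and above all the use of HMAC tags from Python's standard library as a stand-in for real digital signatures. An actual deployment would use public-key signatures and a verifiable credential format rather than shared secrets.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Mandate:
    issuer: str       # who authorized this link
    subject: str      # who receives the authority
    scope: frozenset  # actions the subject may perform
    tag: str          # HMAC tag, a stand-in for a real signature


def _tag(key, issuer, subject, scope):
    payload = json.dumps(
        {"issuer": issuer, "subject": subject, "scope": sorted(scope)},
        sort_keys=True,
    ).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def issue(keys, issuer, subject, scope):
    """Create one link of the chain, tagged with the issuer's key."""
    scope = frozenset(scope)
    return Mandate(issuer, subject, scope, _tag(keys[issuer], issuer, subject, scope))


def prove_mandate(keys, chain, root, agent, action):
    """Return True if `chain` links `root` to `agent` and permits `action`."""
    expected_issuer, allowed = root, None
    for link in chain:
        if link.issuer != expected_issuer or link.issuer not in keys:
            return False  # broken chain or unknown issuer
        good = _tag(keys[link.issuer], link.issuer, link.subject, link.scope)
        if not hmac.compare_digest(good, link.tag):
            return False  # link was altered after it was issued
        if allowed is not None and not link.scope <= allowed:
            return False  # a delegate cannot widen the scope it was given
        allowed, expected_issuer = link.scope, link.subject
    return bool(chain) and expected_issuer == agent and action in allowed


# Hypothetical parties and keys.
keys = {"acme-corp": b"corp-secret", "procurement-agent": b"agent-secret"}
chain = [
    issue(keys, "acme-corp", "procurement-agent", {"quote", "purchase"}),
    issue(keys, "procurement-agent", "shopping-subagent", {"quote"}),
]

can_quote = prove_mandate(keys, chain, "acme-corp", "shopping-subagent", "quote")
can_purchase = prove_mandate(keys, chain, "acme-corp", "shopping-subagent", "purchase")

# Tampering with a link's scope invalidates its tag.
forged = [chain[0], replace(chain[1], scope=frozenset({"quote", "purchase"}))]
forged_ok = prove_mandate(keys, forged, "acme-corp", "shopping-subagent", "purchase")
print(can_quote, can_purchase, forged_ok)
```

The counterparty never judges whether the agent is "trustworthy". It only checks that each link was issued by the party above it and that the requested action falls inside every scope along the way.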

OpenData, AI, Data Governance — Stefaan Verhulst

A new civic analytics tool emphasizes problem framing, equity considerations, and cross-city benchmarking using open data from Boston, Pittsburgh, and San Jose.

(Report) AI, Agency and Protocols: Power and Governance in Open Social Networks — Claire Kivior / Project Liberty

Examines how AI, human agency, and technical protocols intersect within open social network ecosystems, with attention to power dynamics and governance structures.